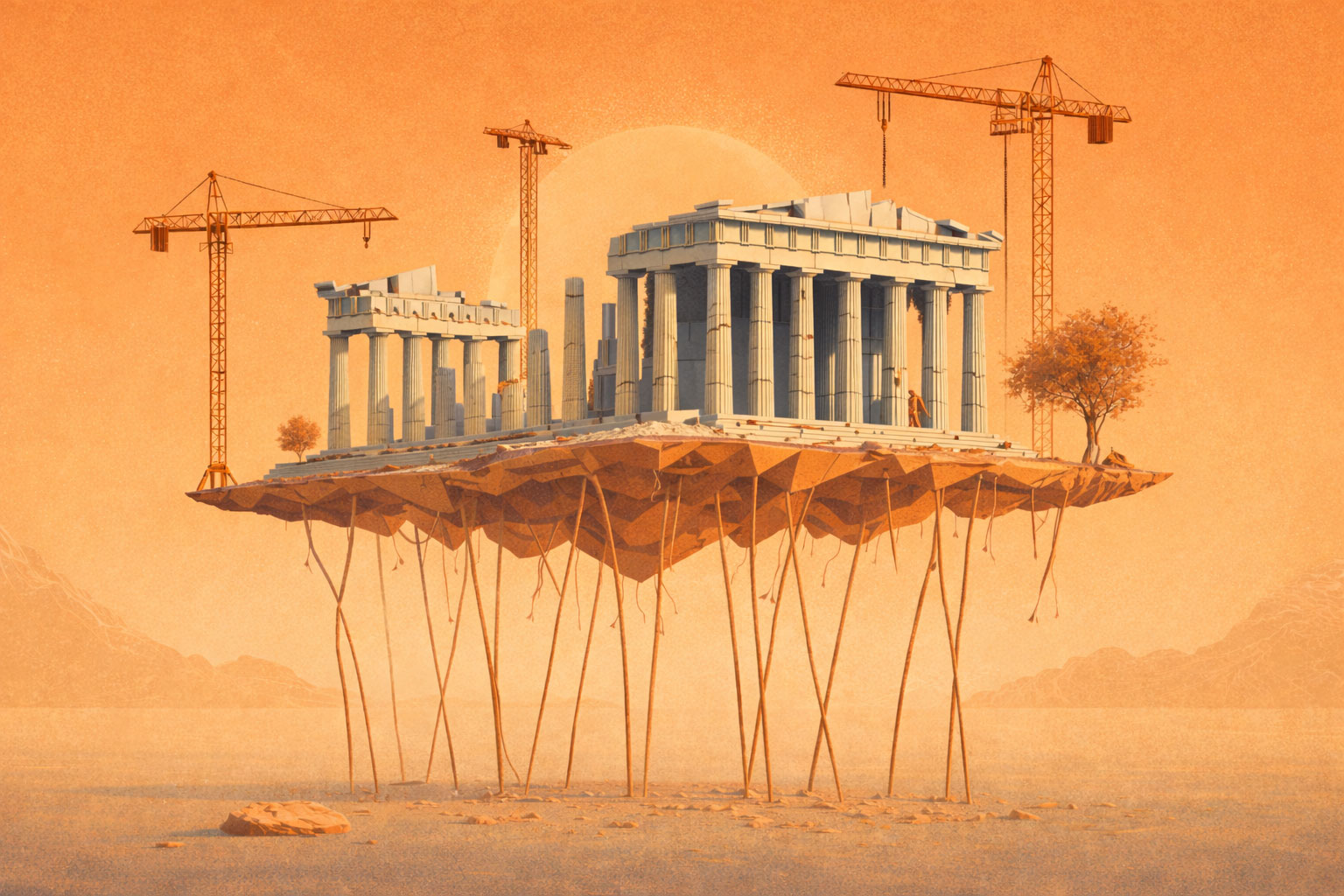

AI is transitioning from experimentation to core engineering operations, where impactful decisions actually happen. Executives are under pressure to show real returns, not impressive demos, while employees are losing patience with tools that slow them down instead of making them more effective. In this environment, weak foundations are no longer a background issue. They directly determine whether AI earns trust, scales, or quietly loses traction.

AI systems only perform as well as the information they can access and the knowledge that contextualizes it. In most enterprises, that information is fragmented, outdated, and unowned, while the knowledge needed to apply it has never been documented. AI is asked to operate on top of it anyway, which is why early demos look promising and real usage falls apart.

Fixing this does not mean rebuilding your entire documentation system or asking every team to document everything. It means identifying the small set of information AI already relies on and making it accurate, current, and clearly owned. More importantly, it means running a deliberate knowledge management process that captures the experience and decision-making context currently locked in people's heads and turns it into something AI can actually use.

This is an operating model problem, not a tooling problem. And it will not solve itself. The assumption that AI can be pointed at a large pile of accumulated content and magically synthesize it into usable knowledge is the most persistent misconception in enterprise AI today. That synthesis — from raw data to structured information to applied knowledge — requires intentional process at every step.

Most organizations use data, information, and knowledge interchangeably, which is exactly where things go wrong. Data is raw and unstructured. Information is data that has been processed to carry context and meaning. Knowledge is what happens when someone applies information through experience to make a decision or solve a problem. Knowledge also divides into explicit knowledge, which can be written down and shared, and tacit knowledge, which is personal, experiential, and notoriously hard to formalize.

That last transition — information to knowledge — is the one AI cannot do on its own, and it is the one most strategies skip entirely. Each layer requires deliberate design to move to the next. Without that, AI simply accelerates bad outputs.

Across internal work and client implementations at SPAN Digital, the failure pattern is consistent. AI pilots launch quickly. Initial demos look promising. Then answers start coming back wrong, inconsistent, or unverifiable. Trust erodes. Usage drops. Humans go back to asking humans.

The AI did not break. The information infrastructure underneath it was never production-ready, and the knowledge it needed was never captured in the first place.

Most enterprises still treat information as static documentation scattered across wikis, tickets, shared drives, and Slack threads. That is inefficient for people. For AI, it is fatal — especially when the knowledge needed to interpret and apply that information was never documented at all. AI does not compensate for messy information or missing knowledge. It amplifies both.

Independent research points to the same root cause.

Gartner consistently cites poor data readiness and weak knowledge practices as leading reasons AI initiatives fail to move beyond pilots. McKinsey's State of AI research finds that AI adoption is widespread but only about one-third of organizations have scaled it beyond pilots, with organizational and foundational issues cited more often than model limitations. IBM studies on enterprise AI adoption highlight trust, explainability, and data quality as the primary barriers to scale.

Different firms. Same conclusion. AI struggles when the data and information environment is not production-ready and critical knowledge remains undocumented.

At SPAN, we ground our approach in the distinction between data, information, and knowledge described above. The model is not new. Where most organizations go wrong is in assuming that AI automatically moves them up the stack: that throwing a model at accumulated content will somehow synthesize data into information and transform that information into knowledge. It will not.

The harder problem is capturing knowledge: synthesizing the context, decisions, and experience that live across a team and making it available as structured information that can be delivered to people and AI systems at the point of need — when a decision is being made, when a problem is being solved, when an engineer is troubleshooting at 2am. This requires a knowledge management process — deliberate, ongoing, and owned. It does not happen organically, and it certainly does not happen by pointing an AI at a decade of accumulated documentation.

If AI is embedded in engineering, operations, or decision workflows, then information and knowledge have to be treated with production discipline: accuracy is owned, freshness is enforced as systems and decisions change, and sources are constrained to what is trusted and explainable.
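As a rough illustration of what that discipline could look like in practice, the sketch below gates which sources an AI system is allowed to draw from. Everything in it is an assumption made for illustration: the `Source` record, the `max_age_days` freshness budget, and the function names are hypothetical, not part of any particular tool or of SPAN's own implementation.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional


@dataclass
class Source:
    """Hypothetical registry entry for one information source."""
    name: str
    owner: Optional[str]   # person or team accountable for accuracy
    last_reviewed: date    # when the content was last confirmed correct
    max_age_days: int      # assumed freshness budget for this source
    trusted: bool          # explicitly approved for AI use


def eligibility_problems(source: Source, today: date) -> List[str]:
    """Return the reasons a source should not be served to an AI system."""
    problems = []
    if not source.owner:
        problems.append("no owner")
    if today - source.last_reviewed > timedelta(days=source.max_age_days):
        problems.append("stale")
    if not source.trusted:
        problems.append("not approved")
    return problems


def eligible_sources(sources: List[Source], today: date) -> List[Source]:
    """Keep only sources that are owned, fresh, and approved."""
    return [s for s in sources if not eligibility_problems(s, today)]
```

The point of returning reasons rather than a bare yes or no is that each rejected source tells someone what to fix: assign an owner, review the content, or decide whether it belongs in the trusted set at all.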

But here is the part that production discipline alone does not cover: even with strong foundations, AI will sometimes be wrong. Just as a knowledgeable person occasionally gives an incorrect answer, an AI system operating on good information will still produce outputs that need checking. "Trust but verify" has to be the operating mantra.

Every good newspaper has a fact-checking department. The review and correction processes that most organizations have historically implemented in a half-hearted manner must now be institutionalized. Feedback loops must exist so that errors improve the system rather than undermine trust. When these conditions are missing, AI output becomes confident noise — and that is when executive trust disappears.

This is why many AI programs stall after the pilot phase. The organization invested in intelligence without investing in the information infrastructure, the knowledge capture process, or the review discipline it depends on.

Engineering is where this breaks fastest. The failure is rarely missing data. It is missing context and decisions that were never written down. Onboarding, troubleshooting, and legacy systems expose these gaps immediately, especially when critical context lives in a few senior engineers' heads.

Before AI: Architectural decisions live as outdated documentation or undocumented understanding. Legacy systems are understood by a small number of long-tenured engineers. New hires ramp by interrupting the same people repeatedly. Troubleshooting depends on who happens to be available and what they know.

After AI, without knowledge management: An engineering copilot is introduced. It provides generic responses based on outdated information. It misses edge cases because the knowledge about them was never documented. Engineers stop trusting it and revert to Slack pings and escalation.

After AI, with knowledge management: Core systems, decisions, and failure modes are explicitly captured as structured information. Ownership is assigned for keeping it current. AI is constrained to authoritative sources only. Gaps surfaced during onboarding and incidents feed back into the system. Review and correction processes catch errors before they erode trust.

In practice, this reduces ramp time, lowers interruption load on senior engineers, and shortens incident resolution. Not because the model changed, but because the knowledge was made explicit and the information was structured to be usable.

A reasonable objection: "This sounds like more work."

It is different work, not more work. The effort shifts from generating output to curating good content and reviewing what AI produces. Less time writing from scratch, more time ensuring what exists is accurate and complete, more time verifying AI outputs against reality. The overall cycle is still faster — often significantly so — but the distribution of effort changes. Organizations that expect AI to eliminate work rather than redirect it will be perpetually disappointed.

This work is intentionally narrow. It focuses on the specific information and knowledge that AI already depends on in real workflows, not on documenting everything or redesigning how the entire organization works.

The test is simple:

If a critical answer matters to decisions or execution, someone must own the underlying information and ensure the relevant knowledge is captured. They must be accountable for keeping it usable through review and correction. In practice, this often means developing narrowly scoped agents that draw from curated information and knowledge to support specific decisions — not general-purpose systems trained on everything.

Doing nothing feels cheaper than fixing knowledge foundations, but the costs show up quickly. AI investments fail to gain adoption. Onboarding slows. Senior engineers become bottlenecks. Leaders stop trusting AI-driven outputs. Teams compensate by creating shadow processes and workarounds, which further erode confidence. By the time this becomes visible, the damage is already done.

Pick one workflow where AI is already live or actively being piloted; engineering onboarding and incident response both work well. Inventory the data and information sources that workflow depends on and identify which ones the AI is allowed to use. Pay special attention to critical knowledge (decisions, workarounds, edge cases) that exists only in people's heads and has never been documented.

For each source, answer three questions: who owns accuracy, how often it is updated, and what happens when it is wrong. For undocumented knowledge, identify who holds it and whether it has been captured.

Then sample real AI outputs from the last two weeks and trace them back to their source material. If you cannot confidently explain why an answer was given or who is accountable for fixing it, you have found the constraint. Do not expand scope. Fix that one domain first — capture the missing knowledge, structure the information properly, put review processes in place — and measure whether trust and usage improve.
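The tracing step can be as simple as a spreadsheet, but a minimal sketch in code makes the logic concrete. It assumes you can record, for each sampled answer, which sources it drew on, and that you have a mapping from source name to accountable owner. The `SampledAnswer` record and `audit_answers` function are hypothetical names introduced here for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple


@dataclass
class SampledAnswer:
    """One AI answer pulled from real usage, with the sources it drew on."""
    question: str
    cited_sources: List[str] = field(default_factory=list)


def audit_answers(
    answers: List[SampledAnswer],
    owners: Dict[str, Optional[str]],
) -> List[Tuple[str, str]]:
    """Return (question, finding) pairs for answers that cannot be explained.

    An answer is flagged when it cites no source at all, or when any
    cited source has no accountable owner in the `owners` mapping.
    """
    findings = []
    for answer in answers:
        if not answer.cited_sources:
            findings.append((answer.question, "no traceable source"))
            continue
        unowned = [s for s in answer.cited_sources if not owners.get(s)]
        if unowned:
            findings.append(
                (answer.question, "unowned source: " + ", ".join(unowned))
            )
    return findings
```

An empty result means every sampled answer can be explained and has someone accountable behind it. Anything else is a candidate for the one domain to fix first.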

If you are a CIO or CTO, start with one question:

Where is AI already being used, and who owns the information it accesses and the knowledge it needs to apply that information correctly?

If the answer is unclear, that is the work. Not the next model release. Not another pilot.

Just making information and knowledge behave like infrastructure — and building the review discipline to keep it reliable.

Not glamorous. Just necessary.

Gartner: AI failure due to lack of AI-ready data and foundations. Gartner predicts 60% of AI projects will be abandoned by 2026 if they are not supported by AI-ready data and governance. Gartner also predicts 30% of generative AI projects will be abandoned after proof of concept by end of 2025, citing poor data quality, unclear value, and governance gaps.

McKinsey: AI adoption is high, but scalable business value is limited. McKinsey State of AI 2025 findings show widespread AI adoption, but only about one-third of organizations have scaled AI beyond pilots.

IBM: Trust, explainability, and data quality as barriers to scale. IBM research identifies data accuracy, trust, bias, explainability, and governance as primary barriers to realizing AI value at scale.